Hello together,

I'm currently migrating an old SOAP application with DBF tables to REST API using SQL Server.

During performance testing, I'm seeing a significant degradation with the REST API and JSON.

I'm testing locally on my development PC and using the Web Connection Web Server v8.4.

Client:

lcParameter = '{"TableName":"' + tcTableName + '","Parameter":"' + tcParameter + '","id":' + Num2C( tnID ) + ',"UseXML":' + Num2C( lnUseXML ) + '}'

lcJSON = goHttp.POST( gcURL + 'GetData.wc', lcParameter )

or zipped XML

lcCompressedJSON = goHttp.POST( gcURL + 'GetData.wc', lcParameter )

lcJSON = GZipUncompressString( lcCompressedJSON )

Server: Web Connection Web Server v8.4

*-- Get data from SQL-Server

lnReturn = goWCServer.oSQL.Execute( lcCommand, 'cursSelectData' )

*-- JSON with CURSOR to JSON

luData = CREATEOBJECT( "EMPTY" )

ADDPROPERTY( luData, "Request", "cursor:cursSelectData" )

...

RETURN( luData )

*-- XML

CURSORTOXML( 0, 'lcXML', 3, 0, 0, '1' )

JSONService.isRawResponse = .T.

Response.ContentType = "text/xml"

Response.BinaryWrite( lcXML )

or zipped XML

lcCompressed = GZipCompressString( lcXML, 1 )

Response.BinaryWrite( lcCompressed )

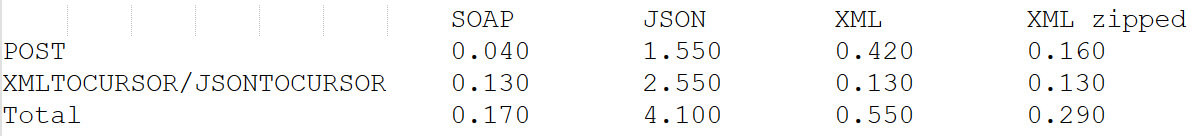

Duration on the client

Are the speed issues due to my WestWind development environment setup? I read something about filein/fileout.

How can I increase the speed?

Why is using JSON so much slower, even with POST? The CURSORTOJSON function only takes 0.006 seconds on the server.

Might be a few things:

First, JSON tends to be more verbose, especially if you run it in debug mode so much of this may be due to the size of the data travelling over the wire. Data also doesn't compress as small as XML. For Json in JsonSerializer non-debug mode makes a big difference as it strips out the extra white space that causes both overhead and many extra bytes of content especially for large results.

You're really looking at two separate issues: Serialization and data transfer and these are dependent on each mechanism.

The fact that the time goes down enormously for either mechanism with compression seems to indicate that issue is mainly the data transfer that's slow - not the generation of the data. So the JSON data likely is much larger than the XML data (formatted JSON output most likely).

Even so, CursorToXml() uses native FoxPro library code (ie. it's C++ code) so that'll be highly efficient, while the JSON cursor generation runs dynamic, reflective code that has to evaluate and convert all the types as it goes so that will always be a lot slower. Also depends on the data types - some types like strings are much more efficient - numbers and dates are very inefficient in JSON (probably also in XML but again handled in native code).

If JSON (or XML) generation is something you look at for performance you should probably reevaluate the size of the data you are returning and whether that is really necessary. Most likely your data set returned is very large (or you have very slow hardware) to take that long for serialization.

Hello Rick,

Might be a few things: First, JSON tends to be more verbose, especially if you run it in debug mode so much of this may be due to the size of the data travelling over the wire. Data also doesn't compress as small as XML. For Json in JsonSerializer non-debug mode makes a big difference as it strips out the extra white space that causes both overhead and many extra bytes of content especially for large results.

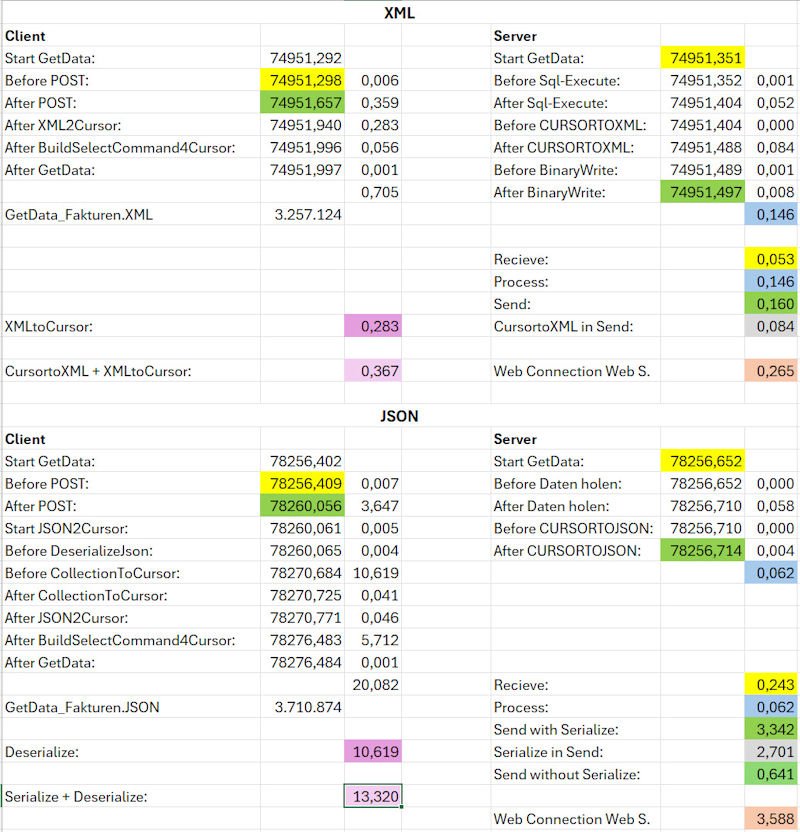

I conducted a new and very detailed test.

If JSON and XML are created on the server side as FLAT without CR and LF, they will be approximately the same size.

JSON: 3711 kB

XML: 3257 kB

Cursor from SQL-Server as a comparison: 1844 kB

You're really looking at two separate issues: Serialization and data transfer and these are dependent on each mechanism.

Yes, I can imagine that.

Here are the conversion times.

CURSORTOXML: 0,084 s

XMLTOCURSOR: 0,283 s

Serialize JSON: 2,701 s

Deserialize JSON: 10,619 s

The fact that the time goes down enormously for either mechanism with compression seems to indicate that issue is mainly the data transfer that's slow - not the generation of the data. So the JSON data likely is much larger than the XML data (formatted JSON output most likely).

No, see above, not much bigger.

Even though the send time is now 0.641 seconds instead of 0.160 seconds, this runtime is still acceptable.

Even so, CursorToXml() uses native FoxPro library code (ie. it's C++ code) so that'll be highly efficient, while the JSON cursor generation runs dynamic, reflective code that has to evaluate and convert all the types as it goes so that will always be a lot slower. Also depends on the data types - some types like strings are much more efficient numbers and dates are very inefficient in JSON (probably also in XML but again handled in native code).

Creating an XML file and back to a cursor takes 0.367 seconds

compared to creating a JSON file and back to a cursor taking 13.320 seconds.

That's a huge difference.

If JSON (or XML) generation is something you look at for performance you should probably reevaluate the size of the data you are returning and whether that is really necessary. Most likely your data set returned is very large (or you have very slow hardware) to take that long for serialization.

Yes, it can easily end up being a 5 MB XML file.

Just to reiterate, it's not a web application but an intranet application with access to an SQL server

via a REST API using WestWind Web Connection Server.

... or you have very slow hardware

Even though my workstation is over 5 years old, I don't think an AMD Ryzen 9 5950X with 128 GB of RAM and

Samsung 990 Pro or 980 Pro SSDs is very slow hardware.

Therefore, I use now XML instead of JSON for data transfer from the server to the client.

The Excel spreadsheet of the evaluation:

My point is this:

The bigger the data, the slower it gets regardless of mechanism used, but XmlToCursor and CursorToXml has the huge advantage that it's running native C++ code. There's no built in object serialization in FoxPro though, so try serializing a hierarchical object in XML. That's not going to show those same benefits (if also using FoxPro code in wwXml for example or even using the XSL Schema mechanism if you can ever actually create a schema that matches).

Performance matters but it's not everything - the reason REST is popular is because it allows for easy data shaping and easy integration into all sorts of tools, which was not (and definitely no longer is) the case with XML as that has fallen out of favor.

If you sent huge data sets over the wire using REST or XML for that matter, either you can accept the slower perf, or find some other way to pass that data around (like zipping up the DBF files and unzipping on the other end for example) or even using XML in those specific scenarios.

+++ Rick ---

FWIW I remember a long while back I did some testing of Json results vs XML results in .NET, and even there it turned out that XML was often much faster. It has something to do with how the document trees are generated and with XML they are highly optimized native tools in most libraries for XML, while JSON is mostly run through parsers.

It wasn't as drastic as what we see with what you show (because of the discrepancy between native and FoxPro code), but it's a common thing. Yet REST won regardless as the protocol that is most widely used now - nobody is implementing new XML services these days - what is out there is mostly legacy stuff.

FoxPro processing further suffers if strings get large it starts slowing down significantly with (even optimized) concatenations.

Another thought here is if you are controlling both ends of the service, you can mix and match JSON and XML - use XML for those large performance critical request, JSON for everything else.

+++ Rick ---

Another thing is that JSON doesn't have a native conversion to cursor so you're in fact running multiple commands - first deserializing into a collection and then turning the collection into a cursor. So that'll make the overhead considerably worse. FWIW, in my tests XmlToCursor() is terribly slow - slower in fact than JSON deserialization in my tests which is somewhat odd.

If you're doing cursor -> cursor you're much better off packaging the binary DBF data and shipping that over the wire, then doing any sort of serialization. You can use EncodeDbf() and DecodeDbf() for this and send the raw binary data to the server and back.

Ultimately I think the main issue is that sending multi-megabytes of data over the wire as XML or JSON is generally not a good idea - rare that APIs can actually use that data directly, and for any other purposes binary uploads are the way to go for efficiency if that matters.

+++ Rick ---

Hello Rick,

Thank you for your input.

I will test EncodeDbf() and DecodeDbf()

Finally, here are the results comparing SOAP with DBF tables versus REST API with SQL Server.

I'm glad that the speed is doubled via the REST API over the network...

Data with 4665 records => 12850 kB Cursor

SOAP:

Local: 3.921 s

Network: 5.001 s with 1 GBit

Network: 9.859 s with 40 Mbit over Internet - simulated with NetLimiter.

REST API with XML:

Local: 1.536 s

Network: 2.004 s with 1 GBit

Network: 5.596 s with 40 Mbit over Internet - simulated with NetLimiter.